Software that Learns by Watching

Overworked and much in demand, IT support staff can't be in two places at once. But software designed to watch and learn as they carry out common tasks could soon help--by automatically performing the same jobs across different computers.

The new software system, called KarDo, was developed by researchers at MIT. It can automatically configure an e-mail account, install a virus scanner, or set up access to a virtual private network, says Dina Katabi, an associate professor at MIT.

Crucially, the software needs to watch an administrator perform a task only once before it can carry out the same job on computers running different software. Businesses spend billions of dollars each year on simple and repetitive IT tasks, according to reports from the analyst groups Forrester and Gartner. KarDo could reduce these costs by as much as 20 percent, Katabi says.

In some respects, KarDo resembles software that can be used to record macros--a set sequence of user actions on a computer. But KarDo attempts to learn the goal of each action in the sequence so it can be applied more generally later, says MIT post-graduate Hariharan Rahul, who codeveloped the system.

When IT staff want KarDo to learn a new task, they press a "start" button beforehand and a "stop" button afterward. During this "learning phase," KarDo attempts to map each action performed in the graphical user interface, such as clicking on a particular icon or button, to a system-level action, such as starting or closing a program or opening a Web page. This mapping is what allows a task to be applied across machines running different software, says Katabi. "I can go to my desktop, click on the Internet Explorer icon, go to a website, and then click on a particular link to download a file," she says. KarDo could then apply the same actions on a machine running a different Web browser, such as Firefox or Chrome. KarDo also compares the actions performed during the learning phase with a database of other tasks.
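The article doesn't describe KarDo's internals, but the core idea of mapping GUI clicks onto software-independent actions can be sketched in a few lines of Python. Everything here is hypothetical: the event format, the hard-coded `GUI_TO_ACTION` table (KarDo has to infer such a mapping rather than look it up), and the `learn` and `replay` helpers are illustrative assumptions, not KarDo's actual design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GuiEvent:
    """A raw event recorded while the administrator works (hypothetical format)."""
    widget: str       # label of the icon, button, or field that was used
    detail: str = ""  # e.g. the URL typed into the address bar


@dataclass(frozen=True)
class Action:
    """A software-independent, system-level action."""
    kind: str
    arg: str = ""


# Hypothetical lookup from widgets on the recording machine to system-level
# actions; a real system would have to infer this mapping by watching.
GUI_TO_ACTION = {
    "Internet Explorer icon": "launch_browser",
    "Address bar": "open_url",
    "Download link": "download",
}


def learn(trace):
    """Map each recorded GUI event to an abstract action, skipping unknown widgets."""
    return [Action(GUI_TO_ACTION[e.widget], e.detail)
            for e in trace if e.widget in GUI_TO_ACTION]


def replay(actions, browser):
    """Turn abstract actions into steps for whichever browser this machine has."""
    steps = []
    for a in actions:
        if a.kind == "launch_browser":
            steps.append(f"start {browser}")
        elif a.kind == "open_url":
            steps.append(f"{browser}: open {a.arg}")
        elif a.kind == "download":
            steps.append(f"{browser}: download {a.arg}")
    return steps


# Recorded once on a machine with Internet Explorer...
trace = [
    GuiEvent("Internet Explorer icon"),
    GuiEvent("Address bar", "http://example.com/tools"),
    GuiEvent("Download link", "scanner.exe"),
]
# ...and replayed on a machine that has Firefox instead.
for step in replay(learn(trace), "Firefox"):
    print(step)
```

Because the learned task is stored as abstract actions rather than screen clicks, the same recording can target a different browser simply by changing the `browser` argument.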

KarDo can reliably infer how to reproduce each subtask after watching it performed just once, says Rahul. For example, after watching an e-mail account being set up using Microsoft Outlook, it can do the same on other computers running different e-mail software. KarDo has been tested by IT staff at MIT on hundreds of combinations of real tasks and was found to get tasks right 82 percent of the time. When KarDo doesn't perform a task correctly, the consequences aren't serious, Katabi says.

The ultimate goal is for KarDo to intervene completely automatically, although this has not yet been tested. The idea is that when a user sends a request to the IT department, KarDo would perform the task automatically.

This sort of "programming by demonstration" is not a new idea, says Stephen Muggleton, an expert in machine learning at Imperial College London. But the approach has remained very much a research curiosity, he says. "An obvious concern from a user point of view will be the accuracy of the learned model," says Muggleton. Normally it takes relatively large amounts of data to generate error-free machine learning models, he notes.

"There's a great deal of promise in learning procedures and plans by watching," says Eric Horvitz of Microsoft Research in Redmond, WA. However, in general, this is very challenging to pull off. It is usually hard to do anything useful without constraining the nature of the task, says Horvitz.

KarDo was announced last week as the winner of the Web/IT track of MIT's $100K Entrepreneur Competition.

What's in a Tweet?

Researchers at the Palo Alto Research Center (PARC) are developing new ways to deal with the torrent of information flowing from social media sites like Twitter. They have developed a Twitter "topic browser" that extracts meaning from the posts in a user's timeline. This could help users scan through thousands of tweets quickly, and the underlying technology could also offer novel ways of mining Twitter for information or for creating targeted advertising.

The researchers' idea was to give users a way to deal with a large number of Twitter messages quickly. They found that many users wanted to catch up on what's been going on without having to go through every single tweet in their timeline.

Ed Chi, area manager and principal scientist for the Augmented Social Cognition Research Group at PARC, says that the information coming through Twitter resembles a stream--users will dip into it from time to time, but they don't want to consume it all at once. His group's work is called the "Eddi Project" in reference to the idea of eddies in a stream.

The researchers developed two main ways of filtering Twitter content. The first, presented recently at the ACM Conference on Human Factors in Computing Systems in Atlanta, is a recommendation system that ranks which posts in a Twitter stream a user is likely to find most interesting, based on factors such as the contents of posts and the user's interactions with other Twitter users. The second tool, the Twitter topic browser, summarizes the contents of a user's timeline so that the user can quickly survey what information has come through Twitter without having to read through every post.

To create this second tool, the researchers focused on identifying the topic of each tweet. Michael Bernstein, a researcher at the Computer Science and Artificial Intelligence Lab at MIT who is involved with the project, says the group found that Twitter users were interested in filtering posts relating to specific topics, and that they found existing methods lacking. "Hashtags"--user-generated annotations that categorize tweets--are perhaps the best current option, but most tweets don't have these tags. Bernstein notes that Twitter, Google, and other companies are developing ways to identify and categorize the most popular topics of discussion on Twitter, such as the Icelandic volcano. For popular topics, the sheer volume of tweets gives algorithms plenty of information to work with; it's much harder, he says, to figure out the topic of tweets that are more unusual.

A key challenge of extracting meaning from a tweet is its length: no more than 140 characters. Chi says that most natural language processing technology relies on having a larger sample of text to work with. For example, some methods rely on people writing out associations between terms, which requires a lot of work to maintain, and is not the best way to interpret real-time information.

The researchers realized, however, that search engines have been dealing with extracting meaning from a small number of words--in the form of search queries--for years.

"The essence of the approach is to coerce a tweet to look more like a search query and then get a search engine to tell us more," Bernstein says. The researchers first clean up a tweet by stripping out common terms, such as the Twitter slang "RT," which means "retweet." Once their algorithms have isolated the likely significant terms, they feed those into Yahoo's Build your Own Search Service (BOSS), a Web service that can be used to tap directly into Yahoo's search results.
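The clean-up step can be illustrated with a minimal sketch, assuming a simple regular-expression tokenizer and a small hand-picked list of noise terms. `NOISE_TERMS` and `tweet_to_query` are hypothetical; the researchers' actual term list and rules aren't described, and the sketch stops at producing the query string rather than calling Yahoo's service.

```python
import re

# Hypothetical noise list: Twitter slang plus a few very common English words.
NOISE_TERMS = {"rt", "via", "the", "a", "an", "and", "or", "of", "to", "in",
               "on", "is", "are", "for", "from", "with", "at", "it", "this",
               "that", "just"}


def tweet_to_query(tweet: str) -> str:
    """Strip Twitter-specific noise and common words so a tweet reads like a search query."""
    text = re.sub(r"https?://\S+", " ", tweet)  # drop links
    text = re.sub(r"@\w+", " ", text)           # drop @mentions
    text = text.replace("#", " ")               # keep hashtag words, drop the '#'
    words = re.findall(r"[a-z0-9']+", text.lower())
    return " ".join(w for w in words if w not in NOISE_TERMS)


print(tweet_to_query(
    "RT @bob: Ash cloud from the #Iceland volcano grounds flights http://bit.ly/x"))
# prints: ash cloud iceland volcano grounds flights
```

The surviving terms are what would then be handed to a search engine as an ordinary query.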

The Web is the most up-to-date source of data, Bernstein says, and the pages that come up in search results give enough information for the researchers' algorithms to produce a list of topics related to the original tweet.
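Exactly how the algorithms turn search results into topics isn't spelled out. One simple stand-in, sketched here with that caveat, is to count which terms recur across the returned snippets and keep the most frequent ones that aren't already part of the query. The snippets are made-up sample data, and `topics_from_results` is a hypothetical helper, not PARC's method.

```python
import re
from collections import Counter

STOPWORDS = {"the", "a", "an", "and", "as", "of", "to", "in", "on", "for",
             "is", "with", "by", "from", "after", "over"}


def topics_from_results(snippets, query, k=3):
    """Rank terms that recur across search-result snippets, ignoring the query's own words."""
    query_terms = set(query.lower().split())
    counts = Counter()
    for snippet in snippets:
        # Count each term at most once per result so one long page can't dominate;
        # sorting makes tie-breaking deterministic across runs.
        terms = sorted(set(re.findall(r"[a-z]+", snippet.lower())))
        counts.update(t for t in terms
                      if len(t) > 2 and t not in STOPWORDS and t not in query_terms)
    return [term for term, _ in counts.most_common(k)]


# Hypothetical result snippets for the query "iceland volcano ash".
snippets = [
    "Volcano eruption disrupts European air travel",
    "Airlines cancel flights as ash spreads over Europe",
    "Volcanic ash: airlines count cost of eruption",
    "European airspace reopens after eruption",
]
print(topics_from_results(snippets, "iceland volcano ash"))
```

Words already in the query ("volcano," "ash") are excluded, so the output surfaces related context such as "eruption" and "airlines" rather than echoing the tweet back.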

A similar approach could be used with any repository of information, Chi notes, pointing out that companies could use the technology on an intranet to classify bits of information related to more specialized topics.

"Boosting the signal of a tweet by piping it through web search is an application of a well-established information-retrieval technique," says Daniel Tunkelang, an engineer at Google who is an expert on information retrieval. He compares it to using a thesaurus to set a word in a broader context.

However, Tunkelang says the PARC researchers will have to make sure that the tweet-as-search-query approach doesn't collide with search engines' increasing efforts to index tweets. It wouldn't be good for a tweet to return itself as a result.

Chi says that his team is working on a platform for managing various kinds of information streams. This summer, they plan to increase the scale of the Eddi Project so it can be placed on the live Web for testing. The longer-term goal, Chi says, is to build tools that can be optimized for enterprise customers.

Self-Powered Flexible Electronics

Touch-responsive nano-generator films could power touch screens.

Touch-screen computing is all the rage, appearing in countless smart phones, laptops, and tablet computers.

On a bender: This machine is testing the electrical properties of a graphene sheet. Korean researchers have combined these stretchy electrodes with thin-film nano-generators to make an energy-harvesting screen. Credit: Advanced Materials

Now researchers at Samsung and Sungkyunkwan University in Korea have come up with a way to capture power when a touch screen flexes under a user's touch. The researchers have integrated flexible, transparent electrodes with an energy-scavenging material to make a film that could provide supplementary power for portable electronics. The film can be printed over large areas using roll-to-roll processes, but it is at least five years from the market.

The screens take advantage of the piezoelectric effect--the tendency of some materials to generate an electrical potential when they're mechanically stressed. Materials scientists are developing devices that use nanoscale piezoelectronics to scavenge mechanical energy, such as the vibrations caused by footsteps. But the field is young, and some major challenges remain. The power output of a single piezoelectric nanowire is quite small (around a picowatt), so harvesting significant power requires integrating many wires into a large array; materials scientists are still experimenting with how to engineer such arrays into larger devices.
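A back-of-the-envelope calculation shows why large arrays are needed. Assuming each nanowire really delivers about a picowatt and ignoring all losses, reaching even one microwatt (a hypothetical target chosen for illustration) takes on the order of a million wires:

```python
PICOWATT = 1e-12   # watts
MICROWATT = 1e-6   # watts


def wires_needed(target_watts: float, watts_per_wire: float = PICOWATT) -> int:
    """Nanowires required if every wire contributes the same output and nothing is lost."""
    return round(target_watts / watts_per_wire)


print(f"{wires_needed(MICROWATT):,} nanowires for one microwatt")
# prints: 1,000,000 nanowires for one microwatt
```

In practice losses and uneven stress across the array would push the real number higher.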

Samsung's experimental device sandwiches piezoelectric nanorods between highly conductive graphene electrodes on top of flexible plastic sheets. The group's aim is to replace the rigid, power-consuming electrodes and sensors used on the front of today's touch-screen displays with a flexible touch-sensor system that powers itself. Ultimately, this setup might generate enough power to help run the display and other device functions. Rolling up such a screen, for instance, could help recharge its batteries.

"The flexibility and rollability of the nano-generators gives us unique application areas such as wireless power sources for future foldable, stretchable, and wearable electronics systems," says Sang-Woo Kim, professor of materials science and engineering at Sungkyunkwan University. Kim led the research with Jae-Young Choi, a researcher at Samsung Advanced Institute of Technology.

The same group previously put nano-generators on indium tin oxide electrodes. This transparent, conductive material is used to make the electrodes on today's displays, but it is inflexible.

To make the new nano-generators, the researchers start by growing graphene--a single-atom-thick carbon material that's highly conductive, transparent, and stretchy--on top of a silicon substrate, using chemical vapor deposition. Next, through an etching process developed by the group last year, the graphene is released from the silicon and then lifted off by rolling a sheet of plastic over the surface. The graphene-plastic substrate is then submerged in a chemical bath containing a zinc reactant and heated, causing a dense lawn of zinc-oxide nanorods to grow on its surface. Finally, the device is topped off with another sheet of graphene on plastic.

In a paper published this month in the journal Advanced Materials, the Samsung researchers describe several small prototype devices made this way. Pressing the screen induces a local change in electrical potential across the nanowires that can be used to sense the location of, for example, a finger, as in a conventional touch screen. The material can generate about 20 nanowatts per square centimeter. Kim says the group has since made more powerful devices measuring about 200 square centimeters, which produce about a microwatt per square centimeter. Kim says this is enough for a self-powered touch sensor and "indicates we can realize self-powered flexible portable devices without any help of additional power sources such as batteries in the near future."
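Multiplying power density by area gives a rough sense of the totals involved. The sketch assumes the quoted densities hold uniformly across the whole surface; applying the early 20-nanowatt figure to a 200-square-centimeter sheet is a hypothetical comparison, since the first prototypes were small.

```python
def total_power_uw(density_nw_per_cm2: float, area_cm2: float) -> float:
    """Total output in microwatts for a uniform power density given in nW/cm^2."""
    return density_nw_per_cm2 * area_cm2 / 1000  # 1 microwatt = 1,000 nanowatts


early = total_power_uw(20, 200)    # early density on a hypothetical 200 cm^2 sheet
later = total_power_uw(1000, 200)  # ~1 microwatt/cm^2 on the ~200 cm^2 devices

print(f"early: {early} uW, later: {later} uW, ratio: {later / early:.0f}x")
# prints: early: 4.0 uW, later: 200.0 uW, ratio: 50x
```

So the larger devices deliver roughly 200 microwatts in total, about fifty times what the earlier material would have produced over the same area.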

The first laptop computers!

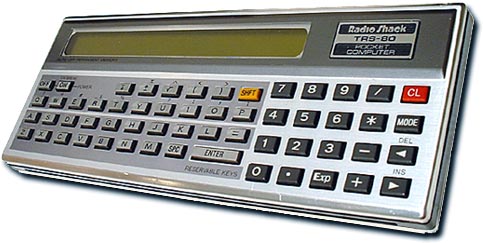

This new TRS-80 Computer is another "first" from the company which brought you the best-selling, world renowned TRS-80. A truly pocket-sized Computer (not a programmable calculator). Of course it is an ultra-powerful calculator too... And it "speaks" BASIC -- the most common computer language, and the easiest to learn. You'll soon be impressed by the phenomenal computing power of this hand-held TRS-80 -- ideal for mathematics, engineering and business application.

The $49.00 Cassette Interface is a requirement for the serious Pocket Computer enthusiast; otherwise there's no way to permanently save your data. Power is provided by 3 internal "AA" batteries or by an external power adapter.

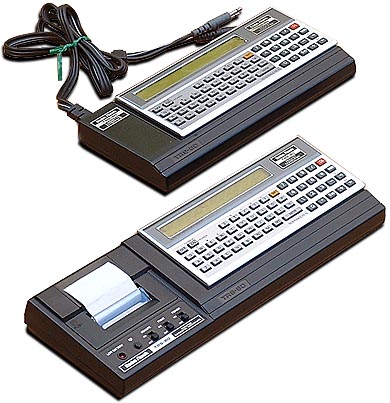

For those who prefer hardcopy, the $149.00 Printer/Cassette Interface is superb. It is a miniature dot-matrix printer (not a thermal printer) as well as a Cassette Interface for data storage/retrieval.

Built-in rechargeable Ni-Cd batteries supply power when the external power adapter is not being used. Unfortunately, the printer prints 16 characters per line, while the PC-1 display is 24 characters wide.

The TRS-80 PC-1 is the first-ever BASIC-programmable pocket-sized computer! It's actually the Sharp PC-1211, sold by Radio Shack in the US.

It takes deep pockets to hold the PC-1, but not to buy one. At only $230, it was very portable and useful.

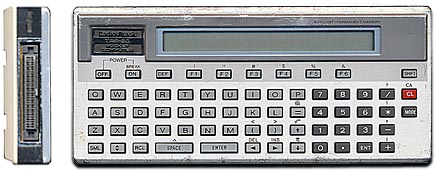

A couple of years later, in 1982, Radio Shack released the PC-2. It offers many improvements over the PC-1, but look - it's huge by comparison!

Larger and heavier (400g vs. 170g), it offers a more powerful BASIC and up to 8K RAM when using the RAM/ROM module slot on the back.


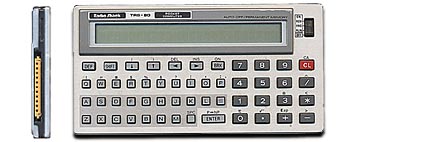

In 1983, the PC-3 appeared at your local Radio Shack store. Ah, now that's a pocket-sized computer!

A new record in computer miniaturization, it is very slim and light, only 105g, or 3.8 oz.


Watch Google Maps in 3D Perspective

Google product manager Peter Birch noted in a blog post, "Earth view offers a true three-dimensional perspective, which lets you experience mountains in full detail, 3D buildings, and first-person dives beneath the ocean." The new Earth View for Google Maps provides a fly-through interface, although you might have some trouble viewing buildings from odd angles. Use the mouse's scroll wheel to zoom in or out. To tilt the view from perpendicular to oblique, press and hold the Control key and drag the map.

Earth View uses the same technology as the Google Earth application. The only difference is that Google engineers have made Earth View run more smoothly in the web browser, which has to handle the 3D perspective.

Now, just for kicks, imagine what Google could do by combining its existing Google Maps technologies. Together, Earth View, Street View, and Shop View could take you places you haven't even imagined. For instance, you could check out fishing gear in a Queensland-based store while sitting in your house in Boston. Sounds interesting, doesn't it? Let's see if Microsoft's Bing Maps can come up with something similar anytime soon.